
Self‑Hosted HA Kubernetes Cluster

Home‑grown, production‑grade Kubernetes platform built from the ground up

Project Synopsis

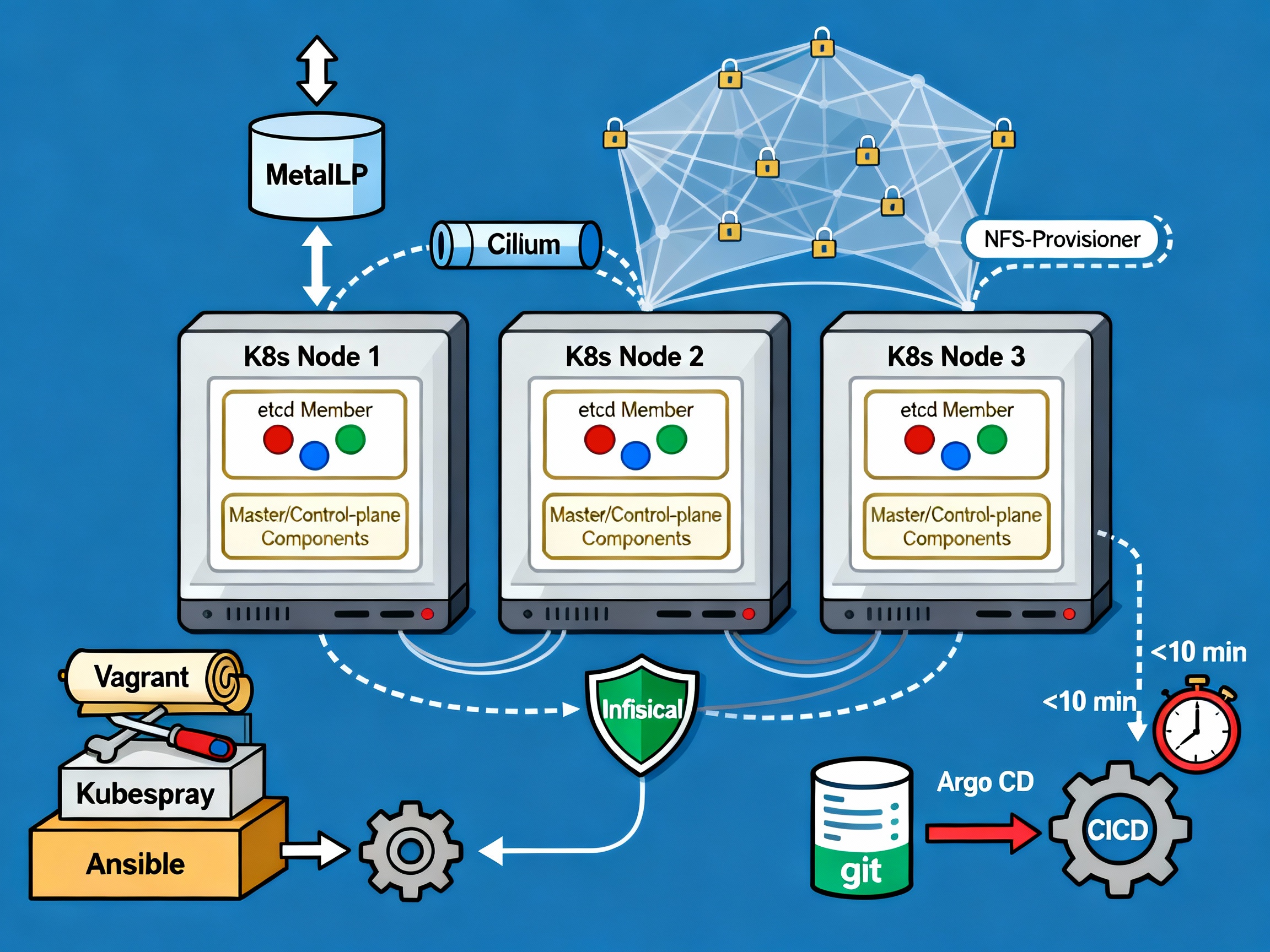

Designed, provisioned, and operated a high‑availability (HA) Kubernetes cluster on two legacy machines: an i5 desktop with 32 GB RAM and an i7 laptop with 16 GB RAM. The whole stack is managed with Infrastructure‑as‑Code (IaC), GitOps, and cloud‑native tooling, turning a home lab into a functional private cloud that supports dozens of services, including Traefik, NFS storage, Argo CD, and Infisical.
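
As a rough sanity check on the hardware budget, the sketch below subtracts the VM allocations listed under Key Highlights (2 VMs per host, 8 GiB each) from each machine's RAM. The host labels are hypothetical, and the quoted GB figures are treated as GiB, which is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Host:
    name: str          # hypothetical label, not taken from the project
    ram_gib: int       # physical RAM, treating the quoted GB as GiB (assumption)
    vm_count: int      # number of VMs placed on this host
    vm_ram_gib: int    # RAM assigned to each VM

    def headroom_gib(self) -> int:
        """RAM left for the host OS if every VM uses its full allocation."""
        return self.ram_gib - self.vm_count * self.vm_ram_gib


hosts = [
    Host("i5-desktop", ram_gib=32, vm_count=2, vm_ram_gib=8),
    Host("i7-laptop", ram_gib=16, vm_count=2, vm_ram_gib=8),
]

for host in hosts:
    print(f"{host.name}: {host.headroom_gib()} GiB headroom")
```

Under these assumptions the desktop keeps 16 GiB for itself, while the laptop has no headroom if both guests use their full allocation. KVM guests usually consume host memory only as they touch it, so the layout can still run, but the laptop is the tighter of the two hosts.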

Key Highlights

| Area | What I Did | Tools & Techniques | Impact |
|---|---|---|---|
| Hardware & OS | Repurposed old hardware, installed Debian 12, tuned SSH (custom port, key‑only auth, X11 forwarding). | Debian, Zsh + Oh‑My‑Zsh, Tmux | Secure, low‑overhead foundation for the cluster. |
| Virtualisation | Created 4 VMs (2 per host) with 2 vCPUs and 8 GiB RAM each, using bridged networking so each VM gets its own IP. | KVM, libvirt, Vagrant (Ruby DSL) | Fast provisioning, isolated environments for control‑plane and workers. |
| Cluster Bootstrap | Replaced manual kubeadm steps with Kubespray (Ansible) to install a 2‑node control plane + 3 etcd replicas. | Kubespray, Ansible, Cert‑manager, Cilium CNI, MetalLB (layer‑2) | Redundant control plane, playbook‑driven node additions, reliable service IP allocation. |
| Load Balancing & Ingress | Deployed MetalLB (layer‑2) as a bare‑metal load balancer, limited to the control‑plane nodes via node selectors. | MetalLB v0.13.9, custom address pool 192.168.xx.10‑50 | Provides stable external IPs for all services without a cloud LB. |
| Storage | Set up an NFS share on the primary host and installed nfs‑subdir‑external‑provisioner as the default StorageClass. | NFS, nfs‑subdir‑external‑provisioner | Persistent volumes shared across all worker nodes. |
| Secrets Management | Integrated Infisical via its Kubernetes operator to inject secrets as env variables or files. | Infisical operator, PostgreSQL backend | Centralised, secure secret handling for all apps. |
| GitOps & CI/CD | Installed Argo CD (Helm) to sync manifests from GitHub, with Kustomize managing the manifests. Fixed sync issues caused by Infisical polling. | Argo CD, Kustomize, GitHub Actions | Zero‑touch deployments; rollout time dropped from hours to minutes. |
| Ingress Router | Adopted Traefik (Helm) with IngressRoute CRDs for URL‑based routing; roadmap to Gateway API. | Traefik, Helm | Flexible, per‑domain routing; automatic TLS is planned. |
| Observability (planned) | Future integration of OpenTelemetry, Prometheus, Grafana, and Jaeger for telemetry, plus Checkov for static security scanning of the IaC. | None yet | Intended to provide full telemetry and compliance checks. |
| Documentation & Automation | Authored a detailed tutorial (the source of this description) and automated repeatable builds; CI pipeline reduces cluster rebuild from > 3 h to < 2 min. | Markdown, Mermaid diagrams, CI scripts | Knowledge transfer and rapid recovery. |
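
The MetalLB row can be made concrete with a small sketch. MetalLB v0.13 is configured through `IPAddressPool` and `L2Advertisement` resources, and the code below builds both as plain Python dictionaries. The pool name `homelab-pool`, the `metallb-system` namespace, and the `192.168.1` prefix are illustrative assumptions; the real third octet is elided in this write‑up, and the node‑selector restriction is not modelled here.

```python
import json


def pool_size(first: int = 10, last: int = 50) -> int:
    """Number of addresses in an inclusive host range such as .10-.50."""
    return last - first + 1


def metallb_layer2_manifests(subnet_prefix: str, first: int = 10, last: int = 50,
                             pool_name: str = "homelab-pool",
                             namespace: str = "metallb-system") -> dict:
    """Build an IPAddressPool and a matching L2Advertisement as a v1 List."""
    if not 0 < first <= last < 255:
        raise ValueError("host range must lie within 1..254")
    address_range = f"{subnet_prefix}.{first}-{subnet_prefix}.{last}"
    pool = {
        "apiVersion": "metallb.io/v1beta1",
        "kind": "IPAddressPool",
        "metadata": {"name": pool_name, "namespace": namespace},
        "spec": {"addresses": [address_range]},
    }
    advertisement = {
        "apiVersion": "metallb.io/v1beta1",
        "kind": "L2Advertisement",
        "metadata": {"name": f"{pool_name}-l2", "namespace": namespace},
        # Announce only the pool defined above over layer 2.
        "spec": {"ipAddressPools": [pool_name]},
    }
    return {"apiVersion": "v1", "kind": "List", "items": [pool, advertisement]}


# "192.168.1" is a placeholder prefix, not the project's actual subnet.
manifests = metallb_layer2_manifests("192.168.1")
print(json.dumps(manifests, indent=2))
print(f"addresses available: {pool_size()}")
```

A `.10`‑`.50` range holds 41 addresses, so at most 41 `LoadBalancer` services can hold an external IP at once. Because `kubectl` accepts JSON as well as YAML, the printed list could be piped into `kubectl apply -f -` once the prefix matches the real LAN.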

Why This Project Stands Out

- HA Control Plane – 2 control‑plane nodes + 3 etcd members; etcd keeps quorum through the loss of any single member.

- Pure‑IaC Lifecycle – Vagrant + Kubespray + Ansible allow a declarative, version‑controlled infrastructure.

- GitOps‑First – Argo CD continuously reconciles the desired state, cutting rollout time from hours to minutes.

- Production‑Ready Services – Traefik, MetalLB, NFS, and Infisical give the cluster all the plumbing needed for real workloads.

- Self‑Hosted Philosophy – Shows mastery of the end‑to‑end cloud stack without relying on any public provider, aligning with modern “edge‑cloud” trends.
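
The quorum claim for the 3‑member etcd cluster follows from simple majority arithmetic, sketched below. The placement of members across the two physical hosts is a hypothetical example, since this summary does not say which VMs run etcd.

```python
def quorum(members: int) -> int:
    """Smallest majority of an etcd cluster with the given member count."""
    return members // 2 + 1


def fault_tolerance(members: int) -> int:
    """How many members can fail while a majority remains."""
    return members - quorum(members)


def survives_host_loss(placement: dict[str, int], lost_host: str) -> bool:
    """True if the members on the remaining hosts still form a quorum."""
    total = sum(placement.values())
    remaining = total - placement[lost_host]
    return remaining >= quorum(total)


# Hypothetical placement: etcd members per physical host.
placement = {"desktop": 2, "laptop": 1}

print("quorum:", quorum(3))
print("tolerated member failures:", fault_tolerance(3))
for host in placement:
    print(f"survives loss of {host}:", survives_host_loss(placement, host))
```

Three members need two votes and tolerate one failure. With only two physical machines, one host necessarily carries at least two of the three members, so the cluster rides out any single VM failure but not the loss of that host.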